One day back in 2013 I was driving around Waco with an eye out for rough or abandoned-looking houses that I might be able to buy and fix. Waco being what it was at the time, I found lots of them. I sent out a couple of dozen letters. One of those letters went to an address in Los Angeles, and I got a call a week later. Mike's father had passed away and left him with a few houses in Waco that he didn't know what to do with. He had actually tried to rent them out, but they were in rough shape and being 2,000 miles away didn't make repairs any easier. He also had fallen behind on the mortgages for two of them. We made a deal, and I quickly got to work on repairs.

This is a pretty typical story of so-called driving for dollars: something a lot of real estate investors and flippers do to find a deal. But what if I could start with a list of absentee owners and work backwards? Getting to skip all the driving around is pretty appealing, and I would only be contacting property owners who live out of state, a group that is much more likely to want to sell.

The next question is, how do you find a list like that? I suppose you could call around to mailing list brokers, but how do you know where they got the data? How accurate is it? And the number of people on such a list wouldn't be that large in your target zip codes, so you might wind up buying a list for the whole state just to meet the minimum purchase.

Fortunately, real estate ownership is public record, and most counties post it online through the tax appraisal website. Great! Now you can just do a quick search and you'll have your list in no time, right? Not so fast! Most CAD (county appraisal district) sites let you search by owner name or property address, and that's about it. Want to filter by living area? Nope. Want to sort by owner's mailing address? Out of luck. What if you wanted a list of people who own more than seven houses in your county? Take a hike.

All of that data is on the site and accessible to the public, but it is not searchable. The best you can do is type a random letter of the alphabet into the name search box and then click through all the listings manually.

Getting the data

I began to wonder if there was a way to automate this. Following the old maxim that when all you have is a hammer, everything looks like a nail, I started banging out some Python code using the Flask framework: Python because the third-party "requests" library makes it easy to download a web page, and Flask because it lets you build a web front end for your app (say I wanted a control panel for my project).

Getting the data wouldn't be too hard. I could follow the same workflow I hinted at above: enter a random letter of the alphabet and then click on any random property record. Do this 100,000 times or 1 million times, depending on the size of the county, and you will eventually have all of it. Although a Python script is probably capable of sending multiple requests per second, I would definitely not want to push the limits. Imagine if you were the website owner and somebody started hammering you with traffic that far exceeds what you normally get. The "sleep()" function in Python's standard "time" module lets you pause between requests. As a rule of thumb, I wouldn't want to send more than one request per minute, which is on the order of what a human user would do.
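Here is a minimal sketch of that "one request, then wait" loop. It is not the script I actually ran: the function names, the list of URLs, and the 60-second default are assumptions for illustration, and the real URL pattern depends entirely on your county's site. The fetch and sleep functions are passed in as parameters so the loop can be tried out without touching a live website.

```python
import time
from typing import Callable, Iterable, Iterator, Tuple


def fetch_page(url: str, timeout: float = 30.0) -> str:
    """Download one page using requests (third-party: pip install requests)."""
    import requests  # imported here so the rest of the sketch runs without it

    response = requests.get(url, timeout=timeout)
    response.raise_for_status()  # fail loudly on 4xx/5xx instead of saving an error page
    return response.text


def crawl_politely(
    urls: Iterable[str],
    fetch: Callable[[str], str] = fetch_page,
    delay_seconds: float = 60.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Iterator[Tuple[str, str]]:
    """Yield (url, html) pairs, pausing between consecutive requests."""
    for index, url in enumerate(urls):
        if index > 0:
            sleep(delay_seconds)  # no pause before the very first request
        yield url, fetch(url)
```

Because it is a generator, nothing is downloaded until you loop over it, and you can stop at any point without having queued up a pile of requests.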

Saving the data

Let's add a step between scraping the data and placing it into a MySQL database where we can run queries on it: save the raw HTML to a text file on your hard drive. Remember that every property record is itself a webpage, and webpages are subject to change at any time. Your parsing scripts might keep on looking for a tag that says "owner_name", but who knows if the website changed it to "owner_name1", for example. Saving the raw HTML lets you go back and fix it later if you notice any problems.
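As a rough sketch of that step, something like the following would do. The function name, the "property_id" used for the file name, and the ".html" extension are my assumptions here; use whatever identifier your county's site puts on each record.

```python
from pathlib import Path


def save_raw_html(html: str, property_id: str, out_dir: Path) -> Path:
    """Write one property page to disk untouched and return the file path."""
    out_dir.mkdir(parents=True, exist_ok=True)  # create the folder on first use
    path = out_dir / f"{property_id}.html"
    path.write_text(html, encoding="utf-8")
    return path
```

One file per property keeps things simple: if the parser turns out to be wrong for some records, you can re-parse just those files without downloading anything again.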

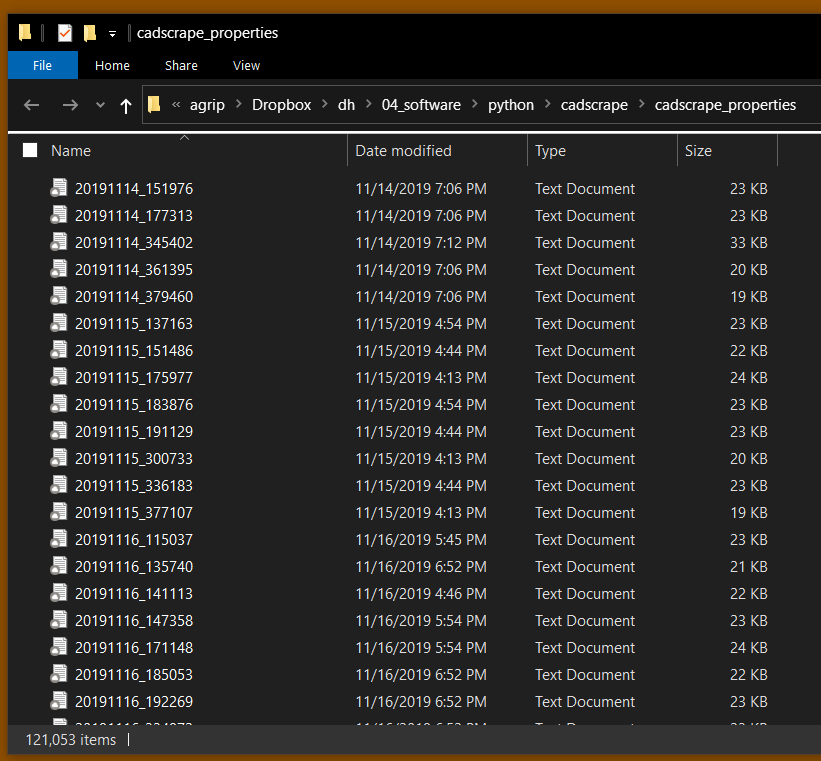

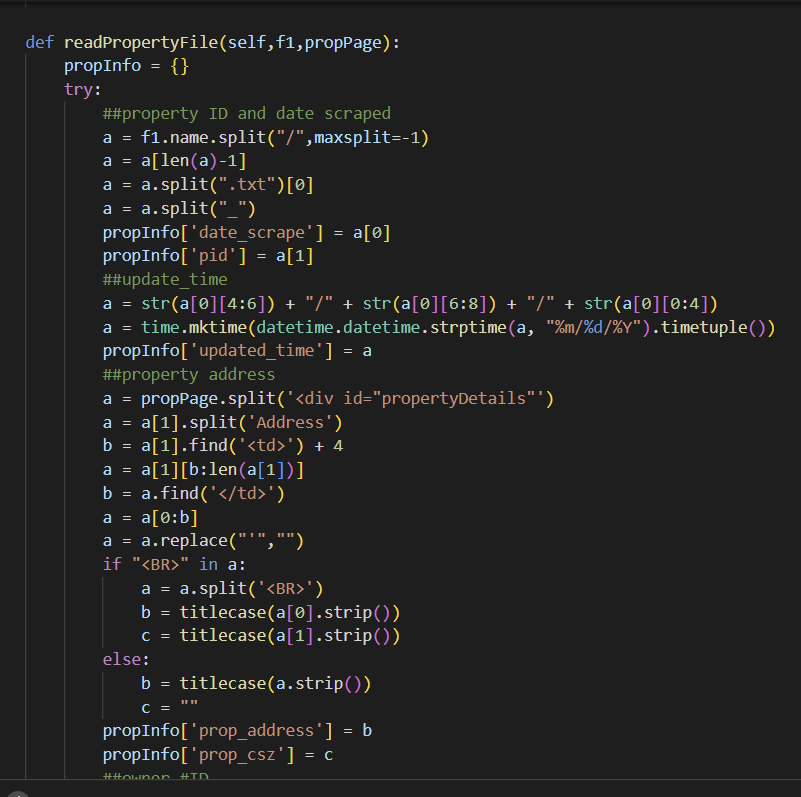

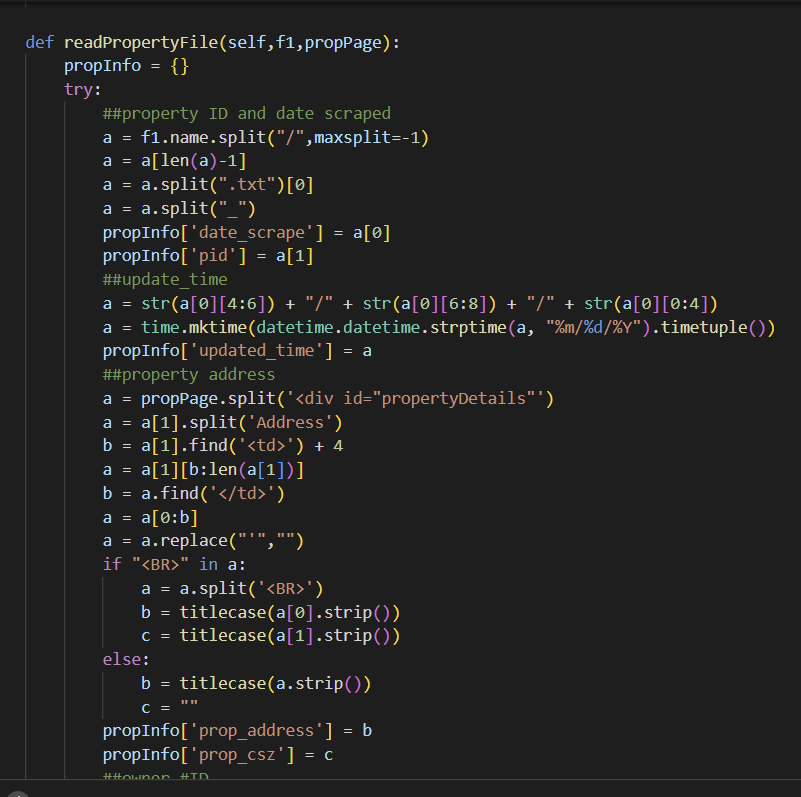

Parsing the data

Now that you have a folder full of text files, the next thing to do is write a script that can drill down on the specific data you want, like names, mailing addresses, deed histories, and so on. Python is pretty good for this too. Write a loop that cycles through all the files, and then split apart each text file using the HTML tags. The code builds a Python dictionary with the information for each property page.
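To give a feel for it, here is a simplified sketch that uses the standard library's html.parser rather than splitting strings by hand. It assumes each value sits inside an element whose id attribute names the field (for example id="owner_name"); that markup, the field names, and the function names are hypothetical, and a real CAD page will need its own rules.

```python
from html.parser import HTMLParser
from pathlib import Path


class FieldParser(HTMLParser):
    """Collect the text inside elements whose id is one of the wanted fields."""

    def __init__(self, wanted_ids):
        super().__init__()
        self.wanted_ids = set(wanted_ids)
        self.record = {}
        self._field = None  # field currently being captured, if any
        self._tag = None    # tag name that opened that field

    def handle_starttag(self, tag, attrs):
        element_id = dict(attrs).get("id")
        if element_id in self.wanted_ids:
            self._field, self._tag = element_id, tag
            self.record[element_id] = ""

    def handle_data(self, data):
        if self._field is not None:
            self.record[self._field] += data

    def handle_endtag(self, tag):
        # Simplification: stop at the first closing tag with the same name.
        if tag == self._tag:
            self._field = self._tag = None


def parse_record(html, wanted_ids):
    """Return a dictionary of field name -> cleaned-up text for one page."""
    parser = FieldParser(wanted_ids)
    parser.feed(html)
    return {name: text.strip() for name, text in parser.record.items()}


def parse_folder(folder, wanted_ids):
    """Loop over every saved page in a folder and parse each one."""
    return [
        parse_record(path.read_text(encoding="utf-8"), wanted_ids)
        for path in sorted(Path(folder).glob("*.html"))
    ]
```

Each dictionary then maps straight onto one row in the database, with the field names as columns.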

Running queries

Now that you have all 120,000-odd records in a MySQL database, you can run some queries! How about a few examples:

Q. How many properties are there in McLennan County?

A. 121,052

Q. How many residential properties?

A. 71,605

Q. How many of those have an owner mailing address in Texas?

A. 70,243

Q. How many residential properties are there with an owner mailing address NOT in Texas?

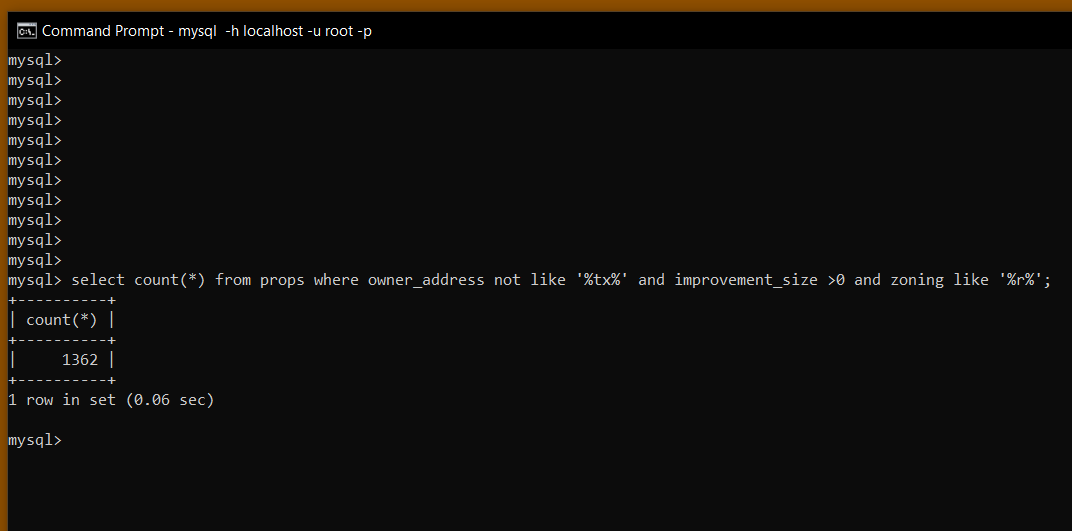

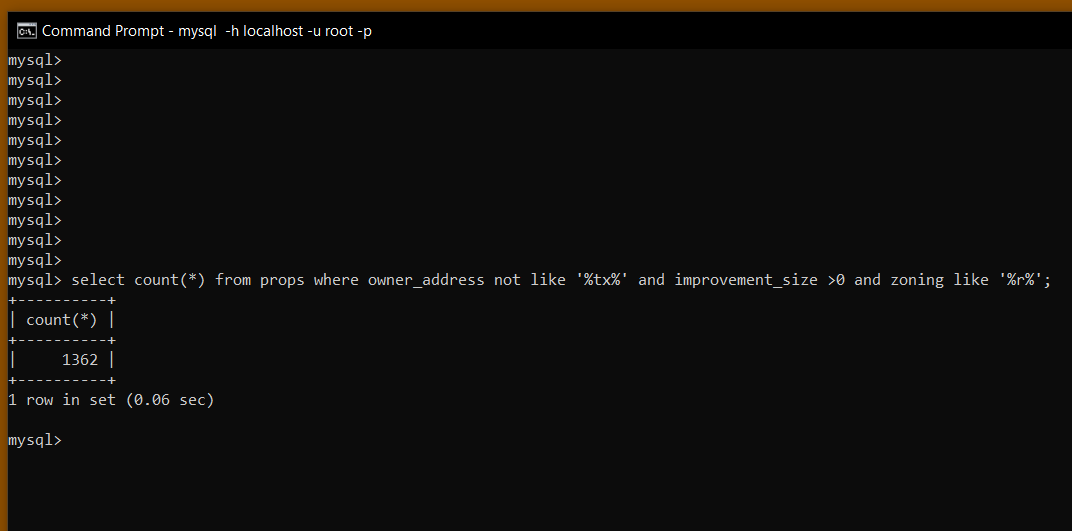

A. 1,362 (example screenshot below)

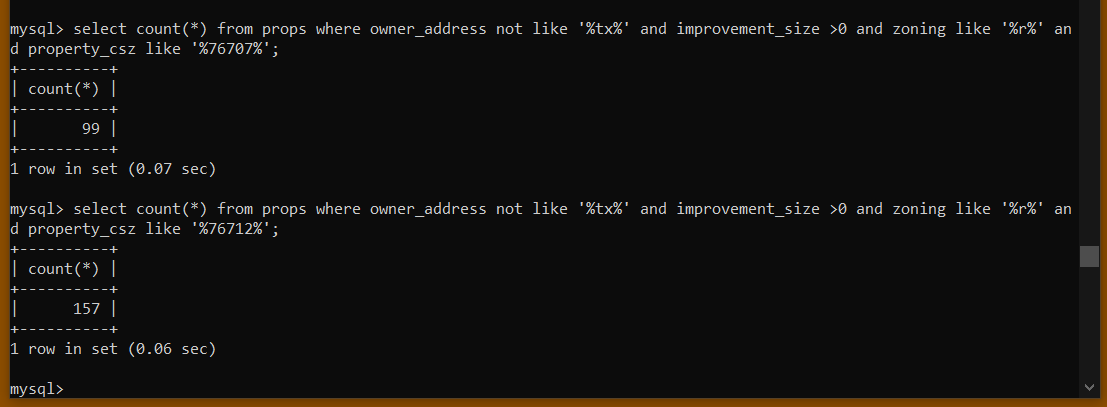

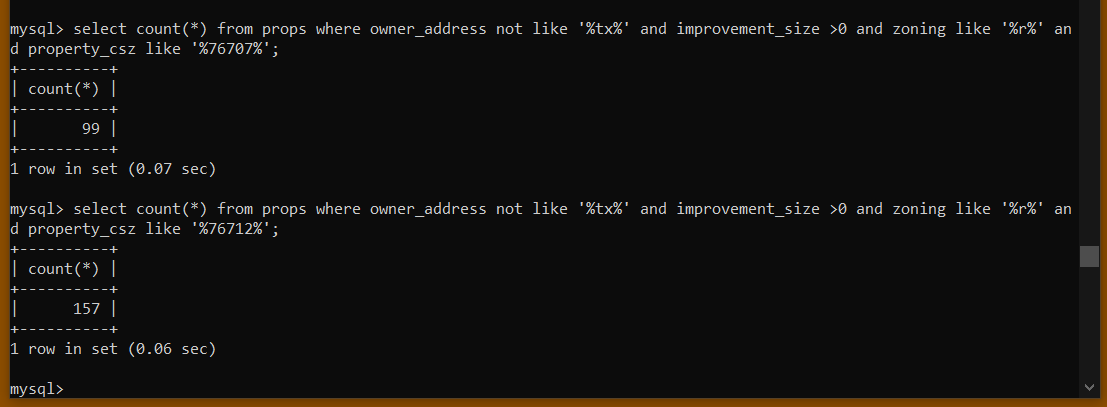

Q. How many of those properties are located in zip code 76707? What about 76712?

A. 99 and 157, respectively (screenshot)
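For the curious, queries along these lines are enough to answer questions like the ones above. This sketch uses Python's built-in sqlite3 module as a stand-in for MySQL so that it runs anywhere, and the table and column names are made up for illustration rather than taken from my actual schema. The SQL itself should carry over to MySQL largely unchanged.

```python
import sqlite3

# Hypothetical schema: one row per property record.
SCHEMA = """
CREATE TABLE properties (
    property_id   TEXT PRIMARY KEY,
    owner_name    TEXT,
    property_type TEXT,
    property_zip  TEXT,
    mailing_state TEXT
)
"""


def absentee_counts_by_zip(conn):
    """Count residential properties with an out-of-state owner, per zip code."""
    cursor = conn.execute(
        """
        SELECT property_zip, COUNT(*)
        FROM properties
        WHERE property_type = 'residential'
          AND mailing_state <> 'TX'
        GROUP BY property_zip
        ORDER BY property_zip
        """
    )
    return dict(cursor.fetchall())


def owners_with_more_than(conn, minimum):
    """List owners holding more than `minimum` residential properties."""
    cursor = conn.execute(
        """
        SELECT owner_name, COUNT(*) AS houses
        FROM properties
        WHERE property_type = 'residential'
        GROUP BY owner_name
        HAVING COUNT(*) > ?
        ORDER BY houses DESC, owner_name
        """,
        (minimum,),
    )
    return cursor.fetchall()
```

The second function covers the "more than seven houses" question from earlier: call it with a minimum of 7 and you get each qualifying owner along with their count.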
